Nvidia has open sourced the KAI Scheduler, a component of the Run:ai platform, under the Apache 2.0 license. The move is a notable step in the ongoing shift toward open-source AI infrastructure. It signals a commitment to transparency and collaboration, and it gives organizations more control over how they manage their AI workloads. Researchers and developers get a tool for improving resource utilization in increasingly complex compute environments, and the wider community gets a scheduler it can inspect, adapt, and contribute to. That kind of community engagement matters for any technology that aims to stay at the forefront of its field.

Addressing the Challenges of Modern AI Workloads

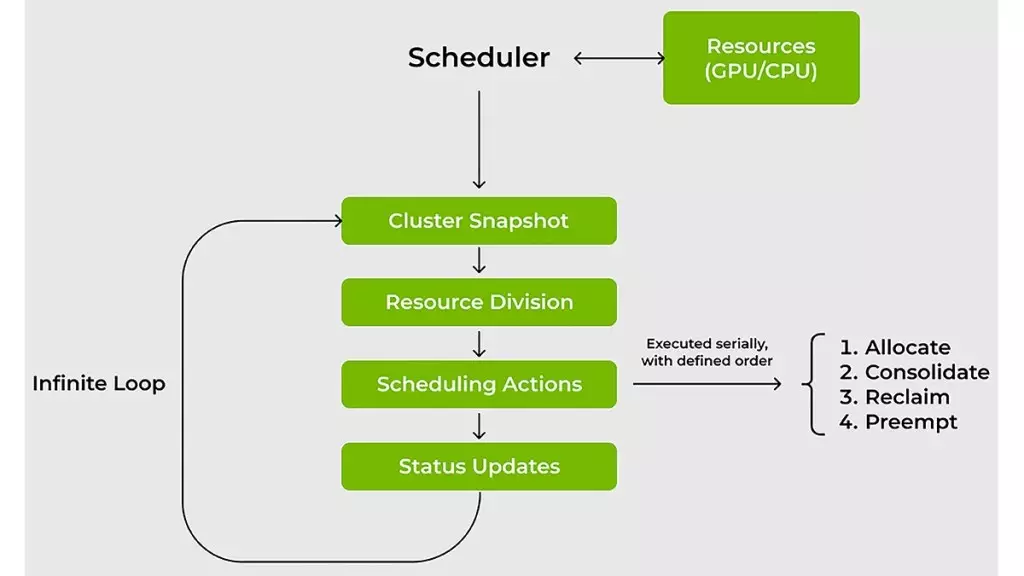

As AI evolves, the demands it places on computational resources are becoming harder to predict. Traditional resource schedulers have struggled to keep pace with the rapid growth in GPU and CPU usage for AI workloads. The KAI Scheduler addresses these shortcomings with a Kubernetes-native design aimed at the changing needs of machine learning (ML) and IT teams. At its core, the scheduler manages fluctuating demand for GPU resources. Workloads can shift in scale quickly, so resource management has to respond just as quickly. KAI Scheduler does this by continuously recalibrating allocations and limits in real time, which keeps tasks from waiting longer than necessary.
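To make the idea of recalibrating allocations concrete, here is a minimal Python sketch of one common approach: weighted max-min fairness, recomputed whenever demand changes. It is an illustration only and is not taken from the KAI Scheduler source. The function name `fair_share`, the queue names, and the weights are all hypothetical.

```python
def fair_share(capacity, demands, weights):
    """Weighted max-min fair split of `capacity` GPUs across queues.

    demands: queue name -> GPUs currently requested
    weights: queue name -> relative weight (> 0)
    Returns queue name -> GPUs granted (never more than demanded).
    """
    grant = {q: 0.0 for q in demands}
    active = {q for q, d in demands.items() if d > 0}
    remaining = float(capacity)
    while active and remaining > 1e-9:
        total_weight = sum(weights[q] for q in active)
        # Offer each active queue a slice proportional to its weight.
        offers = {q: remaining * weights[q] / total_weight for q in active}
        satisfied = {q for q in active
                     if demands[q] - grant[q] <= offers[q] + 1e-9}
        if not satisfied:
            # No queue can be fully satisfied: hand out the offers and stop.
            for q in active:
                grant[q] += offers[q]
            break
        # Fully satisfy the small demands, then redistribute what is left.
        for q in satisfied:
            remaining -= demands[q] - grant[q]
            grant[q] = float(demands[q])
        active -= satisfied
    return grant


weights = {"research": 1, "training": 1, "inference": 1}
busy = fair_share(8, {"research": 2, "training": 10, "inference": 4}, weights)
quiet = fair_share(8, {"research": 2, "training": 1, "inference": 4}, weights)
```

In the `busy` case the small queue gets everything it asked for and the two larger queues split the rest evenly. When the training queue's demand drops in the `quiet` case, rerunning the same function lets the inference queue grow to its full request. A real scheduler would also have to handle guaranteed quotas, priorities, and preemption, which this sketch leaves out.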

Enhancing Efficiency Through Innovative Scheduling Techniques

One of the standout features of the KAI Scheduler is its combination of scheduling strategies: gang scheduling, GPU sharing, and hierarchical queuing. Together, these mechanisms reduce wait times, which are often a significant bottleneck for ML engineers. Users can submit batches of jobs with the assurance that they will run as resources become available, instead of going through a tedious cycle of manual resource allocation. This improves productivity and lets teams focus on building AI solutions.
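Gang scheduling means that a group of related pods, such as the workers of one distributed training job, is placed all at once or not at all. The following Python sketch shows that all-or-nothing rule under simplifying assumptions: one GPU count per pod, a greedy first-fit search, and no preemption. The function `try_gang_schedule` and the node names are hypothetical and do not reflect KAI Scheduler's actual implementation.

```python
def try_gang_schedule(free_gpus, pod_requests):
    """Place every pod of a gang, or none of them.

    free_gpus: node name -> free GPU count (updated only on success)
    pod_requests: list of GPU counts, one per pod in the gang
    Returns a list of (pod_index, node) pairs, or None if the gang must wait.
    """
    trial = dict(free_gpus)  # work on a copy so a failed attempt leaves no trace
    placement = []
    # Place the largest pods first; they have the fewest options.
    order = sorted(range(len(pod_requests)), key=lambda i: -pod_requests[i])
    for idx in order:
        need = pod_requests[idx]
        node = next((n for n, free in trial.items() if free >= need), None)
        if node is None:
            return None  # one pod does not fit, so the whole gang waits
        trial[node] -= need
        placement.append((idx, node))
    free_gpus.update(trial)  # commit only after every pod has a node
    return placement


cluster = {"node-a": 4, "node-b": 2}
too_big = try_gang_schedule(cluster, [4, 4])    # second pod has nowhere to go
fits = try_gang_schedule(cluster, [2, 2, 2])    # all three pods can be placed
```

The failed attempt leaves `cluster` untouched, so no GPUs are held by a half-started job. Because the search is greedy, it can reject a gang that a more exhaustive search would fit; the point here is only the commit-or-abort behavior.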

The scheduler uses bin-packing and consolidation techniques to maximize compute utilization while minimizing resource fragmentation. This matters for organizations that routinely lose capacity to underutilized GPUs and CPUs. By reallocating workloads across nodes, the scheduler helps keep resources from sitting idle when they could be put to use elsewhere. This dynamic resource management also makes it easier for different teams to share computational resources without having to compete for GPU access.
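As a rough illustration of bin-packing, the Python sketch below applies best-fit decreasing: jobs are sorted from largest to smallest, and each one goes to the node with the least free capacity that can still hold it. This is a textbook heuristic chosen for clarity, not a description of KAI Scheduler's internals, and the names `pack`, `node-a`, and `j1` are hypothetical.

```python
def pack(free_gpus, requests):
    """Best-fit decreasing placement of GPU requests onto nodes.

    free_gpus: node name -> free GPU count
    requests: job name -> GPUs needed
    Returns (job -> node or None if pending, node -> GPUs still free).
    """
    free = dict(free_gpus)
    assignment = {}
    for job, need in sorted(requests.items(), key=lambda kv: -kv[1]):
        candidates = [n for n, f in free.items() if f >= need]
        if not candidates:
            assignment[job] = None  # nothing fits; the job stays pending
            continue
        node = min(candidates, key=lambda n: free[n])  # tightest fit
        free[node] -= need
        assignment[job] = node
    return assignment, free


assignment, left = pack({"node-a": 8, "node-b": 8},
                        {"j1": 4, "j2": 2, "j3": 2})
```

Here all three jobs land on one node and the other node stays completely free, so a later job that needs a whole node can still start. A policy that spread the same jobs across both nodes would leave free GPUs on each node but no single node able to host that larger job, which is the fragmentation the article refers to.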

Facilitating Interoperability Across AI Frameworks

Connecting various AI workloads with different frameworks is notoriously challenging. Teams often encounter layers of manual configurations, which can stall progress in prototyping and deployment. KAI Scheduler simplifies this complexity by integrating a podgrouper that automatically identifies and connects with prevalent AI tools such as Kubeflow, Ray, and Argo. This innovative feature helps speed up the development process, allowing researchers to focus on building algorithms rather than getting bogged down by configuration hurdles. The ability to streamline this connection is vital for organizations aiming to stay competitive in the fast-paced world of AI, where speed and efficiency are paramount.
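One plausible way to picture what a pod grouper does is to follow each pod's chain of owners up to the top-level workload and treat all pods under the same workload as one schedulable group. The Python sketch below works on plain dictionaries rather than the Kubernetes API, and it is an assumption about the general idea, not KAI Scheduler's code. The helper names and the object names such as `RayCluster/demo` are hypothetical.

```python
from collections import defaultdict


def top_owner(name, owners):
    """Follow owner links upward until reaching an object with no owner."""
    seen = set()
    while name in owners and name not in seen:  # `seen` guards against cycles
        seen.add(name)
        name = owners[name]
    return name


def group_pods(pods, owners):
    """Group pod names by the top-level object that owns them."""
    groups = defaultdict(list)
    for pod in pods:
        groups[top_owner(pod, owners)].append(pod)
    return dict(groups)


# Hypothetical ownership data: child name -> parent name.
owners = {
    "worker-0": "RayCluster/demo",
    "worker-1": "RayCluster/demo",
    "step-1": "Workflow/etl",
}
groups = group_pods(["worker-0", "worker-1", "step-1", "notebook"], owners)
```

Both Ray workers end up in one group, the workflow step in another, and the standalone `notebook` pod forms a group of its own. With groups derived this way, users would not have to label related pods by hand before submitting them.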

A New Era of Resource Efficiency

In a world where AI workloads continue to skyrocket, the KAI Scheduler offers vital improvements to how resources are allocated and managed. It not only encourages responsible usage of GPU and CPU resources but also curtails the practice of hoarding resources, ensuring that teams only use what they need. With its dynamic reallocation capabilities, the scheduler promotes a culture of efficiency and cooperation, which is essential for fostering innovation in AI research and development.

Nvidia’s commitment to open sourcing the KAI Scheduler reveals its dedication to empowering the AI community at large. By embracing open standards and fostering a spirit of collaboration, Nvidia positions itself as a leader in reshaping the future of AI infrastructure. The KAI Scheduler stands poised to become a pivotal tool for IT and ML teams navigating the complexities of modern AI projects, enabling them to achieve remarkable efficiencies and breakthroughs in their work.
